NEON Data Management

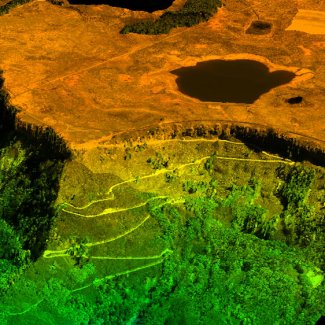

Lidar data showing Grand Mesa

NEON relies on computing software and hardware to manage thousands of sensors, billions of data points, and terabytes of output data. Sensors and technicians collect data from sites spread across the nation. The cyber infrastructure team coordinates the transfer of data from field sites to NEON's central data center.

Working with science and engineering staff, the team 1) standardizes and automates data collection and processing tasks; 2) stores and processes data; and 3) develops operational tools, such as monitoring, alerting, and mobile applications. The team also pays special attention to how data are documented, in both human- and machine-readable formats.

NEON scientists collaborate with cyber infrastructure staff to create data processing algorithms and frameworks that

- Collect and centralize data from thousands of sensors and hundreds of field scientists;

- Process incoming data to create derived data products;

- Assess the quality and integrity of data products; and

- Deliver optimized, usable, high-value data products.

For example, NEON flags sensor-derived data that are out of normal range or implausible, such as a species size measurement outside of the known range. NEON also conducts random recounts, cross-checks collected data against existing data, and reconciles conflicting data using documented quality-control methods.
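The range check described above can be sketched in a few lines of Python. This is only an illustration and not NEON's actual algorithm: the `Reading` type, the `range_flag` and `flag_readings` helpers, the flag codes (0, 1, -1), and the temperature thresholds are all assumptions made up for the example.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Reading:
    """One hypothetical sensor observation."""
    sensor_id: str
    value: Optional[float]


def range_flag(value: Optional[float], lower: float, upper: float) -> int:
    """Return 0 if the value is within [lower, upper], 1 if it is outside,
    and -1 if it is missing and cannot be evaluated (assumed convention)."""
    if value is None or math.isnan(value):
        return -1
    return 0 if lower <= value <= upper else 1


def flag_readings(
    readings: List[Reading], lower: float, upper: float
) -> List[Tuple[Reading, int]]:
    """Pair every reading with its range flag; no reading is dropped."""
    return [(r, range_flag(r.value, lower, upper)) for r in readings]


# Hypothetical air temperature readings in degrees Celsius,
# checked against an assumed plausible range of -40 to 60.
readings = [Reading("t1", 21.5), Reading("t1", 85.0), Reading("t1", None)]
flags = [flag for _, flag in flag_readings(readings, -40.0, 60.0)]
print(flags)  # [0, 1, -1]
```

Flagging rather than deleting keeps the original values available, so a later review step can still inspect or reconcile them.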

Click on any of the topics below, or scroll down to learn more about data storage, software development, and documentation for data interoperability.

Data Processing

Standardized, quality-assured data products are essential to NEON's mission of providing open data to support greater understanding of complex ecological processes at local, regional, and continental scales.

Data Quality

NEON has strong data quality standards and procedures to ensure data are fit for many research projects.

Externally Hosted Data

Numerous NEON data products are hosted at external repositories that best support specialized data, such as surface-atmosphere fluxes of carbon, water, and energy, and DNA sequences.